From Mainframe to Spec

Article

Every legacy modernization project starts with the same question: what does this system actually do?

For mainframe applications, answering that question is brutally hard. A typical COBOL application is not a collection of programs you can read in sequence. It is a system that spans multiple execution environments, data storage paradigms, scheduling layers, and communication protocols — all tightly coupled in ways that are invisible from any single source file.

Most modernization approaches handle this in one of two ways: they either throw consultants at the problem (expensive, slow, error-prone) or they throw an LLM at the raw source code and hope for the best (fast, cheap, unreliable). Both approaches share the same fundamental flaw: there is no way to know if the resulting specification is correct.

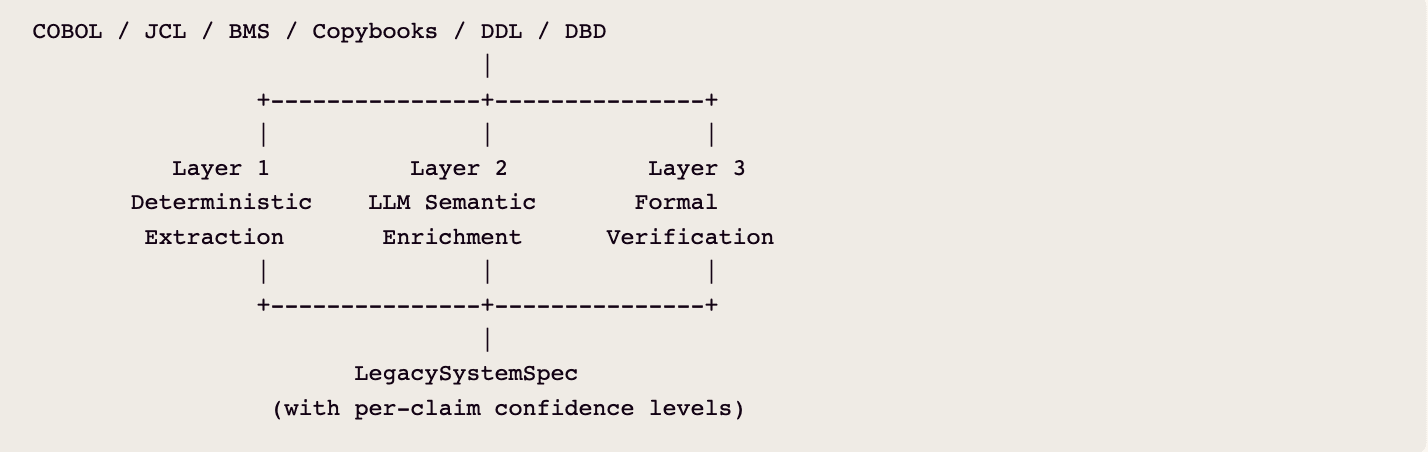

Glover takes a different approach. We combine deterministic code analysis, LLM-powered semantic reasoning, and formal verification into a pipeline that produces specifications you can actually trust — and tells you exactly which parts of the spec are mathematically proven, which parts are LLM-inferred, and which parts have no coverage at all.

Three Layers of Understanding

The pipeline processes COBOL, JCL, BMS maps, copybooks, DDL, and IMS database definitions through three sequential layers, each with a distinct role:

Layer 1 extracts everything that can be extracted without interpretation: program structure, data layouts, call graphs, file relationships, SQL table references, CICS resource bindings, and JCL job flows. This layer never hallucinates, but it cannot reason about intent.

Layer 2 uses LLM agents with full codebase access to discover business domains, trace user journeys, identify invariants, and assemble a coherent specification. This layer can reason about intent, but it can be wrong.

Layer 3 independently transpiles COBOL business logic into annotated Python and runs symbolic verification against the spec. This layer provides mathematical proof for the claims it can reach, and honest silence for the claims it cannot.

The result is a specification where every claim carries a provenance tag: deterministically extracted, LLM-inferred, or formally verified. A modernization team can trust the proven claims absolutely, review the inferred claims with appropriate skepticism, and prioritize manual review for the gaps.

Layer 1: Deterministic Extraction

The first step is structural parsing of every COBOL program using the ProLeap COBOL grammar, producing a full abstract syntax tree. From the Data Division, the pipeline extracts the complete data layout — PIC clauses, REDEFINES relationships, level-88 conditions, USAGE types. From the Procedure Division, it extracts every CALL, PERFORM, EXEC CICS, EXEC SQL, and EXEC SQLIMS statement as structured, typed data — not raw text. COPY references establish shared data dependencies across programs.

The parsed AST feeds two deterministic graph extractors. The call/resource graph captures program-level relationships: which programs call which, which copybooks they share, which external resources they touch. CICS exec blocks are analyzed via pattern matching to extract BMS screen references, CICS transaction identifiers, and VSAM file bindings. The data flow graph adds operation-level detail — not just which resources a program touches, but whether it reads, writes, updates, or deletes them. REDEFINES relationships are captured as edges.

COBOL programs do not exist in isolation. The pipeline discovers and processes JCL files (batch orchestration), BMS files (terminal screen definitions), DDL files (database schemas), and DBD/PSB files (IMS database definitions). The result is a graph that captures not just the COBOL programs but the environment they execute in — including the JCL step sequencing and dataset allocations that often contain business logic the COBOL itself doesn't touch.

Layer 2: LLM Semantic Enrichment

Deterministic extraction tells you what the code does. It does not tell you what the code means. A PERFORM CALCULATE-INTEREST is a control flow edge in a graph; understanding that it implements a declining-balance amortization schedule requires reading the code and reasoning about its purpose. This is where LLM agents enter the pipeline — not as replacements for structural analysis, but as interpreters of it.

Every file passes through an LLM analysis step that produces structured output: a technical summary, data flow analysis, dependency enumeration, and business logic extraction. The analysis prompt carries file-type-specific instructions — COBOL files are analyzed differently from JCL, which are analyzed differently from BMS maps. For JCL specifically, the analysis covers JOB cards, EXEC statements, DD allocations, and conditional execution logic, because business logic in mainframe systems frequently lives outside the COBOL — a DFSORT control statement may implement filtering or aggregation that the downstream COBOL program depends on.

JCL receives additional treatment through a dedicated agentic analysis loop. The agent follows the execution chain from JCL into the connected COBOL programs, producing a Business Requirements Document and then a technology-agnostic functional specification. For batch processing chains where the business logic is distributed across JCL step sequencing, utility program configurations, and COBOL program logic, no single artifact contains the full picture — the agent assembles it.

Domain discovery uses deterministic graph clustering to identify natural boundaries in the codebase, then uses an LLM to name and characterize each domain. Journey cataloging traces the paths through the system that represent coherent business processes: online CICS terminal transactions, JCL batch chains, MQ-driven event flows, and time-based scheduling. Each journey is elaborated with an LLM agent that can read source files, search the codebase, and grep for patterns.

Spec assembly runs through a chain of focused LLM steps — domain flows, entity schemas, invariants, user journeys, technical architecture, non-functional requirements, and gap analysis — each with agentic codebase access. After assembly, a coverage engine checks every program, file, data store, and external system reference in the structural graph against the spec, producing a coverage score and flagging uncovered components for manual review.

Layer 3: Formal Verification

Layers 1 and 2 produce a specification. Layer 3 provides the proof — or the counterexample.

The formal verification pipeline operates independently of the LLM-generated spec, taking the same COBOL source code through a deterministic chain. Deep AST extraction captures every statement type in the Procedure Division: MOVE, COMPUTE, arithmetic verbs, IF/EVALUATE conditionals, PERFORM loops, and file I/O verbs. Rule-based transpilation — no LLM involved — converts this into annotated Python. Data Division items become typed dataclass fields. Level-88 conditions become boolean properties. Paragraphs become methods. Arithmetic verbs become Decimal operations with fixed-point precision matching COBOL's truncation rules.

Contract annotation attaches formal invariants derived from COBOL semantics: PIC clauses generate range and length constraints, level-88 conditions generate valid-value-set invariants, ON SIZE ERROR generates overflow bounds, and PERFORM UNTIL generates loop termination contracts. CrossHair — a symbolic execution engine backed by Z3 — then evaluates each contract across all possible input paths, not just test cases. A contract is marked VERIFIED if it holds for all inputs, or COUNTEREXAMPLE if CrossHair finds a specific input that violates it.

Verified properties map back to the LLM-generated spec, annotating each claim: FORMALLY_BACKED, UNCOVERED, CONTRADICTED, or GAP_FOUND.

What formal verification handles well: pure algorithmic business logic — interest calculations, date arithmetic, account balance validations, eligibility checks. Self-contained computations that operate on in-memory data are exhaustively verifiable.

What it handles partially: data validation and constraint checking. PIC clause ranges and level-88 value sets are verified as invariants on the data model, but only for the program's internal representation.

What it cannot reach: CICS lifecycle patterns, where pseudoconversational programs terminate and restart between interactions with state in a COMMAREA — an execution environment behavior, not a program behavior. External data store interactions (EXEC SQL, EXEC CICS FILE, EXEC SQLIMS) are transpiled as stubbed methods with boundary contracts. JCL orchestration logic, concurrency and lock contention, and BMS screen definitions fall outside the transpilation boundary.

This is not a limitation we hide. It is a limitation we surface. The verification report explicitly categorizes every spec claim by the evidence behind it, so modernization teams know exactly where the mathematical proofs end and where human judgment begins.

The Trust Hierarchy

The three layers form a trust hierarchy where each checks and extends the others.

Layer 1 grounds Layer 2. The deterministic graph provides the skeleton that LLM enrichment fills in. When the LLM discovers a "daily batch settlement process," it is not hallucinating from thin air — it is interpreting a path through the call graph that connects specific JCL steps to specific COBOL programs to specific VSAM files. The structural evidence is traceable.

Layer 2 contextualizes Layer 3. Without the LLM-generated spec, the verifier produces a list of verified properties with no connection to business meaning. With it, the verifier can answer: the spec says this program enforces a minimum balance of $25 — here is the formal proof that it does (or the counterexample showing it doesn't).

Layer 3 audits Layer 2. A CONTRADICTED claim means the LLM got something wrong — with a specific counterexample proving it. A GAP means the LLM missed something the code actually does. Both feed back into the specification as corrections, with mathematical evidence attached.

After all three layers have run, the coverage engine reports: what percentage of the codebase is described in the spec, what percentage of spec claims are formally verified, what percentage are LLM-inferred only. The numbers tell a modernization team whether the spec is ready, where to focus manual review, and what risk they are accepting if they proceed.

What This Means for Modernization

Traditional mainframe modernization treats specification as a human-driven phase written once by consultants who read code, interview subject matter experts, and produce a document. If the document is wrong, the modernized system is wrong — and nobody finds out until UAT, months or years later.

Glover's pipeline processes a full COBOL codebase in hours and produces a complete specification with coverage scores and verification results in a single automated run. Every claim in that spec carries its provenance. Because the pipeline is automated, the specification can be regenerated when understanding improves — a domain expert identifies an error, the correction feeds back in, and formal verification re-checks the affected claims automatically. The spec is a living artifact, not a frozen document.

The goal is not to eliminate human judgment from legacy modernization. It is to focus human judgment on the places where it is actually needed — the CICS lifecycle patterns, the concurrency edge cases, the undocumented business rules that exist only in a retired developer's memory — rather than wasting it on the thousands of straightforward computations that a machine can verify mathematically.

Want to see what Glover extracts from your COBOL? Book a demo.