The Archaeologist: A Structured Approach to AI-Driven Legacy Modernization


Most AI-assisted modernization treats legacy code as a prompt engineering problem: feed code to an LLM, get new code out. Frameworks like BMAD orchestrate AI personas through development phases; OpenAI's Symphony turns kanban tasks into autonomous coding runs; the Ralph Loop keeps agents iterating until done. All three are genuinely effective for greenfield development and well-specified tasks. None of them solves the foundational problem of legacy modernization: understanding what the existing system actually does before writing a single line of replacement code.

Glover Labs takes a different approach. The Archaeologist begins with deterministic structural extraction — parsing, cross-referencing, and vectorizing source code before any LLM sees it. Spec generation is a 13-step pipeline, not a single prompt. A multi-tier coverage engine then compares the generated spec against the actual codebase — verifying that every entity, relationship, state variable, and business rule is accounted for, and surfacing discrepancies where the same logic is implemented differently across layers. The resulting canonical spec enables iterative refinement with domain experts, then feeds a code generation pipeline that decomposes work into independently verified units with test gates, self-healing loops, and build-time architectural enforcement. Every line of legacy code is accounted for. Every line of generated code is tested.

01 — Spec Generation: Understanding Before Generating

The first instinct when modernizing legacy code is to paste it into an LLM and ask "what does this do?" That fails for systems of any real complexity — a large enterprise application spanning multiple languages, database layers, and UI frameworks cannot be understood in a single prompt.

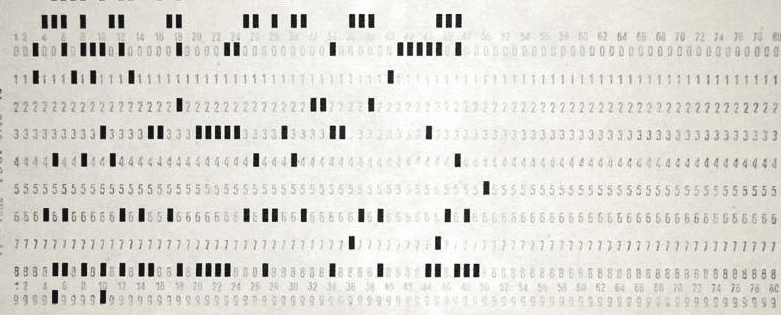

The Archaeologist starts with deterministic structural extraction. Language-specific parsers run in parallel — extracting classes, methods, dependencies, UI components, configuration, database schemas, and stored procedures into structured, typed data. The pipeline already supports .NET and COBOL codebases, with additional language profiles pluggable via the same architecture. A cross-reference resolution step links these extracts: dependency injection patterns, page navigation, inter-file references. A vectorization step generates semantic embeddings for every code chunk, enabling similarity-based retrieval downstream.
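To make the idea of deterministic extraction concrete, here is a minimal sketch. It is not The Archaeologist's parser: it uses Python's standard `ast` module as a stand-in for the .NET and COBOL parsers described above, and the names `ClassExtract`, `extract_classes`, and `resolve_references` are hypothetical. The point is only that parsing and cross-referencing produce typed records with no LLM involved.

```python
import ast
from dataclasses import dataclass


@dataclass(frozen=True)
class ClassExtract:
    """Typed record for one class found in a source file (hypothetical shape)."""
    name: str
    bases: tuple[str, ...]
    methods: tuple[str, ...]


def extract_classes(source: str) -> list[ClassExtract]:
    """Parse source deterministically and return one record per class."""
    extracts = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ClassDef):
            bases = tuple(ast.unparse(base) for base in node.bases)
            methods = tuple(
                item.name for item in node.body
                if isinstance(item, (ast.FunctionDef, ast.AsyncFunctionDef))
            )
            extracts.append(ClassExtract(node.name, bases, methods))
    return sorted(extracts, key=lambda extract: extract.name)


def resolve_references(extracts: list[ClassExtract]) -> dict[str, list[str]]:
    """Link each class to the base classes that were also extracted."""
    known = {extract.name for extract in extracts}
    return {
        extract.name: [base for base in extract.bases if base in known]
        for extract in extracts
    }


SAMPLE = '''
class Repository:
    def save(self, item): ...

class OrderRepository(Repository):
    def find_open(self): ...
'''

extracts = extract_classes(SAMPLE)
links = resolve_references(extracts)
```

Running the same input twice yields identical records, which is the property that lets later LLM steps build on the extracts rather than re-deriving structure from raw text.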

Only after this deterministic foundation is the LLM involved — and not as a single "explain this code" prompt. Spec generation is a 13-step pipeline where each step has a narrow scope: domain discovery via behavioral clustering, entity enrichment, business invariant extraction, technical specification, non-functional requirements, gap identification, user journey mapping, UI analysis, and final assembly into a canonical spec. Each step receives typed, validated context from prior steps and produces structured output — not free text. Every step checkpoints, enabling recovery without recomputation.
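The checkpointing behavior can be sketched in a few lines. This is an illustration under stated assumptions, not the actual pipeline: the step names, the JSON-file checkpoint layout, and the `run_pipeline` function are all invented here, and the two toy steps stand in for the LLM-backed steps listed above.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable

# A step is a name plus a function from accumulated context to structured output.
Step = tuple[str, Callable[[dict], dict]]


def run_pipeline(steps: list[Step], checkpoint_dir: Path, seed: dict) -> dict:
    """Run steps in order, reusing any checkpoint already written to disk."""
    checkpoint_dir.mkdir(parents=True, exist_ok=True)
    context = dict(seed)
    for index, (name, step) in enumerate(steps):
        checkpoint = checkpoint_dir / f"{index:02d}_{name}.json"
        if checkpoint.exists():
            output = json.loads(checkpoint.read_text())  # recover, do not recompute
        else:
            output = step(context)
            checkpoint.write_text(json.dumps(output))
        context[name] = output  # later steps see structured output of earlier ones
    return context


calls = []


def discover_domains(context: dict) -> dict:
    calls.append("discover_domains")
    return {"domains": sorted({c.split(".")[0] for c in context["classes"]})}


def enrich_entities(context: dict) -> dict:
    calls.append("enrich_entities")
    return {"entity_count": len(context["classes"])}


steps = [("discover_domains", discover_domains), ("enrich_entities", enrich_entities)]
seed = {"classes": ["billing.Invoice", "billing.Payment", "auth.User"]}

with tempfile.TemporaryDirectory() as tmp:
    first = run_pipeline(steps, Path(tmp), seed)
    second = run_pipeline(steps, Path(tmp), seed)  # served entirely from checkpoints
```

The second run invokes no step function at all. A production version would also need to invalidate checkpoints when upstream inputs change, which this sketch leaves out.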

The result is a spec built incrementally, each layer informed by the last, rather than hallucinated in one pass.

02 — Multi-Source Discrepancy Detection

Legacy systems are built over decades by different teams with different assumptions. A field might be nullable in the database but only validated in the UI. A business rule might live in a stored procedure that the application layer doesn't know about. A state variable might be set on one screen and consumed on another with no documented contract between them.

The coverage engine systematically detects these discrepancies across multiple tiers — matching UI pages, entities, and domains between the codebase and spec; checking entity relationships across domain boundaries; validating state management patterns using both exact matching and vector similarity as a fuzzy fallback; and verifying that navigation paths and integration points are fully documented.

Where gaps are found, they're surfaced explicitly (unmatched state, unmapped relationships, undocumented integrations) with per-domain coverage percentages. An LLM summarizing code can easily miss a state variable set on one screen and consumed on another with no direct reference between them. The coverage engine is built to catch exactly that case.
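A rough sketch of exact matching with a fuzzy fallback and per-domain coverage percentages follows. Two assumptions are worth flagging: string similarity from the standard library's `difflib` stands in for the vector similarity the coverage engine uses, and the 0.8 threshold, the function names, and the sample identifiers are all made up for illustration.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Case-insensitive string similarity in [0, 1]; a stand-in for embeddings."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def match_name(name: str, spec_names: set[str], threshold: float = 0.8):
    """Return the spec entry for a code item: exact match first, fuzzy second."""
    if name in spec_names:
        return name
    best = max(spec_names, key=lambda candidate: similarity(name, candidate), default=None)
    if best is not None and similarity(name, best) >= threshold:
        return best
    return None


def coverage_by_domain(code_items: dict[str, list[str]], spec_names: set[str]) -> dict:
    """Report coverage percentage and unmatched items for each domain."""
    report = {}
    for domain, names in code_items.items():
        unmatched = [n for n in names if match_name(n, spec_names) is None]
        pct = 100.0 * (len(names) - len(unmatched)) / len(names) if names else 100.0
        report[domain] = {"coverage_pct": round(pct, 1), "unmatched": unmatched}
    return report


code_items = {
    "billing": ["InvoiceTotal", "invoice_status", "LegacyTaxFlag"],
    "auth": ["SessionUser"],
}
spec_names = {"InvoiceTotal", "InvoiceStatus", "SessionUser"}

report = coverage_by_domain(code_items, spec_names)
```

Here `invoice_status` is picked up by the fuzzy fallback, while `LegacyTaxFlag` has no counterpart in the spec and is reported as a gap in the billing domain.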

03 — Evals: Verifiable Confidence

Coverage analysis verifies the spec. The evals framework verifies the generated code.

Acceptance testing runs in three phases: parallel browser-based agents execute grouped criteria against the running application in Docker; an independent verification agent re-checks from a separate workspace; and a self-healing loop generates targeted fixes, rebuilds, and retests — up to ten iterations. During code generation, test gates run after every work unit, not just at the end. Each unit declares which acceptance criteria it covers, so testing is cumulative — a bug in the data layer surfaces immediately, not after six more layers are built on top of it.
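The self-healing loop reduces to a bounded test, fix, retest cycle. The sketch below assumes the test runner and the fix step are supplied as plain callables; `self_heal`, `HealResult`, and the criterion IDs are hypothetical names, and only the cap of ten iterations comes from the description above.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class HealResult:
    passed: bool
    fix_iterations: int
    remaining_failures: tuple[str, ...]


def self_heal(
    run_tests: Callable[[], list[str]],
    apply_fix: Callable[[list[str]], None],
    max_iterations: int = 10,
) -> HealResult:
    """Retest after each targeted fix, stopping on success or at the cap."""
    failures = run_tests()
    iterations = 0
    while failures and iterations < max_iterations:
        apply_fix(failures)  # fix step receives the concrete failures
        iterations += 1
        failures = run_tests()  # rebuild and retest would happen here
    return HealResult(not failures, iterations, tuple(failures))


# Simulated application state: two failing acceptance criteria.
broken = {"AC-3", "AC-7"}
result = self_heal(
    run_tests=lambda: sorted(broken),
    apply_fix=lambda failures: broken.discard(failures[0]),
)

# A failure that no fix resolves stops at the iteration cap instead of looping forever.
stuck = self_heal(run_tests=lambda: ["AC-9"], apply_fix=lambda failures: None)
```

The cap matters as much as the loop: a failure that survives every attempt is returned for human attention rather than retried indefinitely.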

04 — Canonical Specs and Iterative Refinement

The pipeline produces two canonical artifacts: a legacy spec (what the system does today) and a modern spec (what the replacement should do). Both are versioned, checkpointed, and recoverable.

A domain expert can review the spec, see that a particular business rule has been faithfully documented from the legacy code, and say: "That rule was a bug we've lived with for 15 years. The correct behavior is X." They refine through a chat interface — editing domains, entity schemas, user journeys, gaps — and those changes persist into the modern spec that code generation consumes.
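One way to picture how an expert correction flows from the legacy spec into the modern spec is a versioned, immutable record with provenance on each rule. This is a sketch only; the `Spec` and `BusinessRule` shapes, the `refine` function, and the sample rule are invented, and the real spec format is not described here.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class BusinessRule:
    rule_id: str
    behavior: str
    source: str  # "legacy" when extracted from code, "expert" when corrected


@dataclass(frozen=True)
class Spec:
    version: int
    rules: tuple[BusinessRule, ...]


def refine(spec: Spec, rule_id: str, corrected_behavior: str) -> Spec:
    """Return a new spec version with one rule corrected by a domain expert."""
    if all(rule.rule_id != rule_id for rule in spec.rules):
        raise KeyError(rule_id)
    rules = tuple(
        replace(rule, behavior=corrected_behavior, source="expert")
        if rule.rule_id == rule_id else rule
        for rule in spec.rules
    )
    return Spec(version=spec.version + 1, rules=rules)


legacy_spec = Spec(
    version=1,
    rules=(BusinessRule("late-fee", "fee applied on day 29", "legacy"),),
)
modern_spec = refine(legacy_spec, "late-fee", "fee applied on day 30")
```

Because `refine` returns a new object, the legacy spec stays intact as the record of what the system does today, while the modern spec carries the corrected target behavior.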

Without a canonical spec, modernization is a one-shot bet. With one, domain experts guide the target state incrementally. The decades-old workaround doesn't have to be faithfully replicated; it can be corrected.

05 — Code Generation: Orchestrated, Not Ad Hoc

Glover Labs' code generation engine doesn't operate as a single long-running agent session. It's a multi-phase pipeline with structure at every level.

Scaffolding generates the project skeleton plus a set of platform modules — observability, security, data access, testing — that domain code depends on at compile time. Architecture tests enforce their usage deterministically: if generated code bypasses the platform patterns, the build fails and the self-healing loop corrects it. This is build-time enforcement, not LLM-powered review.
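An architecture test of this kind can be as simple as a static check that fails when generated code reaches past the platform modules. The sketch below is an assumption-laden illustration: it inspects Python source with the standard `ast` module, and the `platform_modules` package, the forbidden-import policy, and `find_violations` are hypothetical.

```python
import ast

# Hypothetical policy: low-level modules that domain code must not import
# directly, mapped to the platform module that should be used instead.
FORBIDDEN_IMPORTS = {
    "sqlite3": "platform_modules.data_access",
    "logging": "platform_modules.observability",
}


def find_violations(filename: str, source: str) -> list[str]:
    """Return one message per import that bypasses the platform modules."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            imported = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            imported = [node.module or ""]
        else:
            continue
        for name in imported:
            root = name.split(".")[0]
            if root in FORBIDDEN_IMPORTS:
                violations.append(
                    f"{filename}:{node.lineno} imports {root}; "
                    f"use {FORBIDDEN_IMPORTS[root]}"
                )
    return violations


GENERATED = '''import sqlite3
from platform_modules.observability import get_logger

def load_orders(path):
    return sqlite3.connect(path).execute("SELECT * FROM orders").fetchall()
'''

violations = find_violations("orders.py", GENERATED)
```

Wired into a test suite as `assert not violations`, a check like this fails the build deterministically, and the violation message gives the fix step something specific to act on.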

Implementation decomposes each milestone into work units ordered by layer with validated dependency graphs. Each unit runs as a separate agent session with a fresh context window — eliminating the quality degradation that occurs when a single agent tries to maintain coherence across hundreds of turns. A project constitution — synthesized from the spec's invariants, non-functional requirements, and architectural decisions — is injected into every session. Structured reference documents replace monolithic spec files, so agents read only what they need.
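Ordering work units by a validated dependency graph is a topological sort with two up-front checks: every dependency must exist, and there must be no cycles. The following sketch uses Python's standard `graphlib`; the unit names and the `order_work_units` function are illustrative assumptions.

```python
from graphlib import CycleError, TopologicalSorter


def order_work_units(units: dict[str, set[str]]) -> list[str]:
    """Validate the graph, then return units so dependencies always come first."""
    unknown = {dep for deps in units.values() for dep in deps} - set(units)
    if unknown:
        raise ValueError(f"unknown dependencies: {sorted(unknown)}")
    try:
        return list(TopologicalSorter(units).static_order())
    except CycleError as exc:
        raise ValueError(f"dependency cycle: {exc.args[1]}") from exc


# Each key is a work unit; each value is the set of units it depends on.
milestone = {
    "data-layer": set(),
    "service-layer": {"data-layer"},
    "api-layer": {"service-layer"},
    "ui-layer": {"api-layer"},
}

execution_order = order_work_units(milestone)
```

Rejecting a bad graph before any agent session starts is cheaper than discovering halfway through a milestone that a unit depends on something that was never planned.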

After each work unit, tests run programmatically. Failures trigger a fix agent with real test output, not vague feedback. Each unit produces a separate commit, enabling resume from any point.
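The cumulative test gate can be sketched as follows, assuming each work unit declares the acceptance criteria it covers. `WorkUnit`, `run_with_gates`, and the simulated regression are hypothetical; in practice `build` would be an agent session and `check` a real test run.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class WorkUnit:
    name: str
    covers: frozenset[str]  # acceptance criteria this unit claims to satisfy


def run_with_gates(
    units: list[WorkUnit],
    build: Callable[[WorkUnit], None],
    check: Callable[[str], bool],
):
    """Build units in order; after each one, re-check every criterion covered so far."""
    covered: set[str] = set()
    for unit in units:
        build(unit)
        covered |= unit.covers
        failed = sorted(c for c in covered if not check(c))
        if failed:
            return unit.name, failed  # stop before more layers pile on
    return None, []


units = [
    WorkUnit("data-layer", frozenset({"AC-1"})),
    WorkUnit("service-layer", frozenset({"AC-2"})),
    WorkUnit("api-layer", frozenset({"AC-3"})),
]

built: list[str] = []


def check(criterion: str) -> bool:
    # Simulated regression: AC-1 breaks once the service layer is built.
    return not (criterion == "AC-1" and "service-layer" in built)


stopped_at, failed = run_with_gates(units, lambda unit: built.append(unit.name), check)
```

The gate stops at the service layer even though the broken criterion belongs to the data layer, and the API layer is never built on top of the regression.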

06 — How This Compares

BMAD: Role-based AI orchestration, with Analyst, Architect, and Developer personas working through a four-phase cycle. Effective for greenfield projects where the problem is already understood. It doesn't address the core legacy challenge: no deterministic analysis, no coverage verification, no canonical spec.

The Ralph Loop: Keeps Claude Code iterating autonomously until completion. Powerful for mechanical tasks with clear criteria. The criteria themselves, though, have to be known in advance; it has no mechanism for discovering them from a system it hasn't seen.

Symphony: Orchestrates kanban tasks into autonomous implementation runs with fault-tolerant infrastructure. Impressive at execution. But it assumes tasks are already well-specified; it doesn't answer "what does this system actually do?"

Claude Code + Engineers: Effective when engineers already understand the codebase. It doesn't solve understanding a system across hundreds of files, discovering cross-cutting inconsistencies, producing a verifiable spec, or maintaining architectural consistency across milestones.

These tools optimize for generation. The Archaeologist's differentiation is verified understanding — the deterministic extraction, multi-step spec pipeline, coverage engine, and canonical representation that must precede generation for legacy modernization to be reliable.

07 — Extensibility

The same pipeline structure can ingest screen recordings, process documentation, or interview transcripts and produce a process specification. Deterministic extraction becomes video frame analysis or document parsing; the multi-step pipeline discovers process flows; the coverage engine verifies completeness.

Target state flexibility matters here too. The modern spec isn't locked into "rewrite from scratch." If the right answer is Salesforce for CRM, ServiceNow for workflow, and custom code only for domain-specific logic — the spec expresses that. Code generation produces only the custom components and integration glue. This is how real modernization works.

08 — Application: Banking Operations

Consider a bank's AML and compliance operations running on legacy systems. Rule engines have been built up over decades, regulatory logic is scattered across mainframes and batch jobs, and newer layers have been bolted on for each regulatory change. The people who wrote the original rules have retired. Documentation doesn't match what the code does, and overlapping checks carry subtly different thresholds.

The coverage engine surfaces where the same regulatory check is implemented differently across pathways, where a threshold was updated in one layer but not another. The canonical spec becomes an auditable artifact — compliance officers review documented behavior, flag discrepancies against current requirements, and refine the spec before code generation begins. They're active participants in defining the target state, not passive recipients.
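Detecting a threshold that was updated in one layer but not another comes down to grouping extracted rule implementations by rule and comparing their values. The sketch below assumes extraction has already produced one record per implementation; the rule names, layers, and threshold values are invented for illustration and do not reflect any real regulation or system.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RuleImpl:
    rule: str        # logical check, e.g. a reporting rule
    layer: str       # where this implementation lives
    threshold: float


def find_threshold_conflicts(impls: list[RuleImpl]) -> dict[str, dict[str, float]]:
    """Return rules whose threshold differs between layers, with per-layer values."""
    by_rule: dict[str, dict[str, float]] = defaultdict(dict)
    for impl in impls:
        by_rule[impl.rule][impl.layer] = impl.threshold
    return {
        rule: layers
        for rule, layers in by_rule.items()
        if len(set(layers.values())) > 1
    }


# Hypothetical extracts from three layers of the same system.
impls = [
    RuleImpl("cash-reporting", "mainframe", 10_000.0),
    RuleImpl("cash-reporting", "batch-job", 10_000.0),
    RuleImpl("cash-reporting", "api", 9_500.0),
    RuleImpl("wire-screening", "mainframe", 3_000.0),
    RuleImpl("wire-screening", "api", 3_000.0),
]

conflicts = find_threshold_conflicts(impls)
```

The output names the rule and shows each layer's value side by side, which is the form a compliance officer would need in order to decide which threshold is the correct one.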

The generated system inherits verified business rules, tested behavioral coverage, and architectural consistency — audit trails, encryption, structured logging enforced as build-time constraints rather than afterthoughts.

This is not a problem solved by handing engineers a coding assistant and a deadline. It requires verified understanding first.
