The Figure-8 Loop: An AI Architecture for Modernization

Many modernization efforts fail and almost all of them are long and painful. Not because the engineers were bad at their jobs or the target architecture was wrong, but because the journey from old to new is usually based on an incomplete picture.

This is because encoded into the billions of lines of COBOL, PL/I, RPG, and legacy Java we rely on every day are decades of institutional understanding, decisions and deviations. Business rules that were never formally documented. Workarounds that became policy. Edge cases that exist only in the memory of engineers who are retiring. Modernization isn't just about the code. It's about the code, the data, the processes, the people, and the undocumented decisions that bind them all together.

AI should make all of this vastly easier. And in many ways, it already is. AI agents can analyze codebases, generate test suites, refactor modules, and translate between languages at speeds no human team could match. The modernization market has already crossed $24 billion and is accelerating toward $57 billion by 2030. Gartner predicts that by 2026, 40% of enterprise applications will incorporate AI agents, up from less than 5% in 2025. The tooling is arriving fast.

But AI agents can't reason about what they don't know, and they can't coordinate around decisions that haven't been made.

The Coordination Gap

Here's what happens when you deploy multiple AI agents against a legacy codebase without a coordination architecture: they mostly work! Often impressively! One agent analyzes a module and produces a migration spec. Another generates tests. A third refactors code into a modern target. Each one performs its task with remarkable capability.

And then everything falls apart.

The analysis agent discovered a critical dependency that the refactoring agent didn't know about. The test generation agent wrote assertions against a business rule that had already been superseded — but the decision to change it was made in a Slack thread three weeks ago and never recorded anywhere the agents could see. The migration spec assumed a microservice decomposition that conflicts with a compliance requirement buried in a document that no one digitized.

This isn't a hypothetical. This is the natural outcome of agent-to-agent coordination without a system of record. Decisions get lost. Context bleeds away. Errors compound silently. And the humans who are supposed to be overseeing the process have no single place to look to understand what's been decided, what's been done, and what's gone wrong.

McKinsey's 2025 State of AI report found that the single strongest predictor of enterprise-level AI impact is whether an organization fundamentally redesigned its workflows when deploying AI — not the sophistication of the models themselves. Deloitte's enterprise AI research echoed the finding: 60% of organizational leaders identified legacy system integration as their primary challenge in scaling AI, and the most successful organizations were those building what Deloitte called a "living AI backbone" — an organization-wide, real-time system that adapts dynamically to business and regulatory change.

The data points in the same direction: the bottleneck isn't AI capability. It's AI coordination. For modernization specifically, it's the absence of a persistent, bidirectional system of record that connects what exists today to what you're building tomorrow.

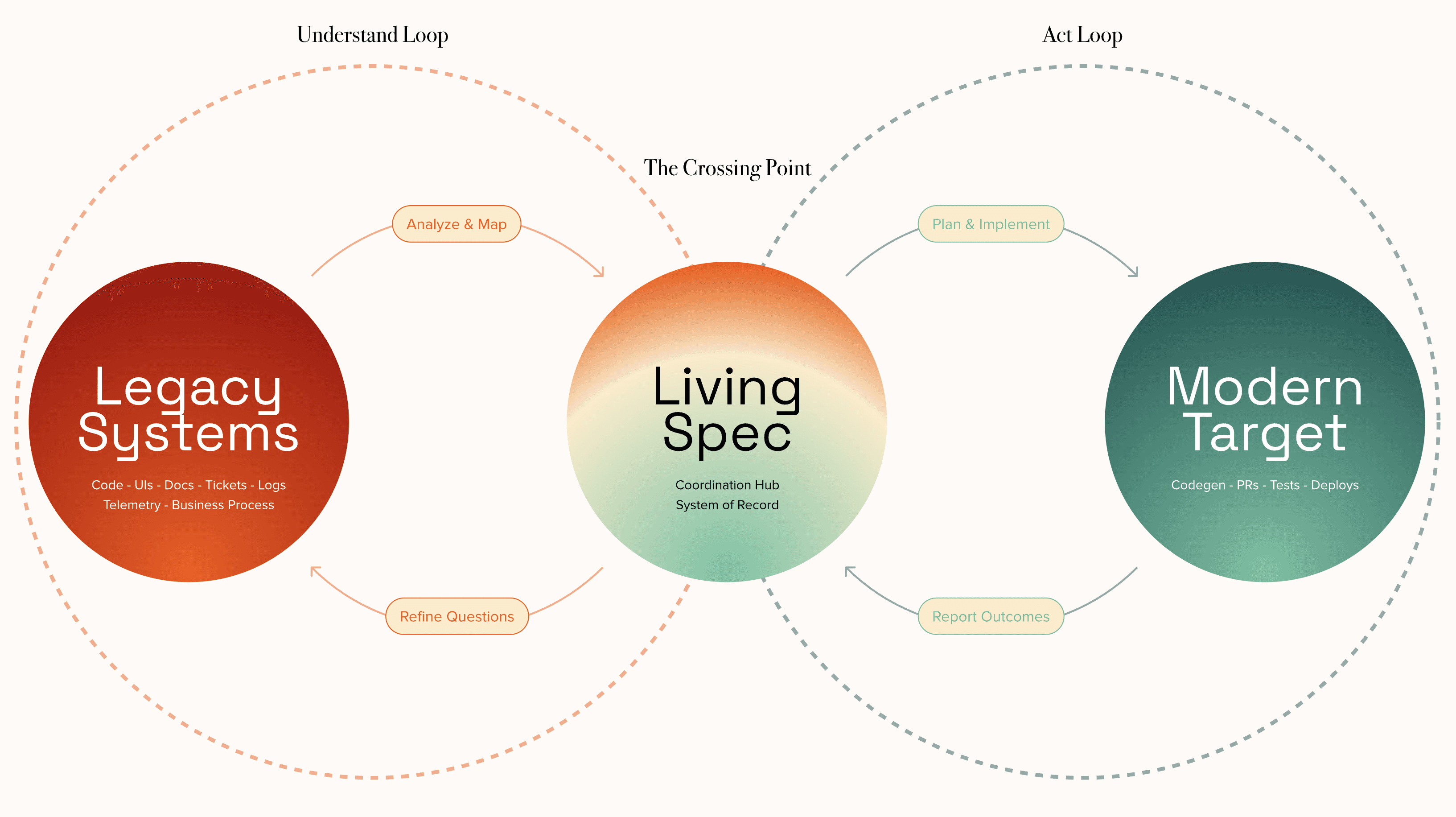

Enter the Figure-8

At Glover Labs, we've spent the last year working on this exact problem. The result is an architecture we call the Figure-8 agent loop — a coordinated dual-loop system that, we believe, is the right structural answer to AI-driven legacy modernization.

The concept draws on a principle that organizational theorists have understood since Chris Argyris introduced double-loop learning in the 1970s: single-loop systems correct errors within existing assumptions; double-loop systems question and revise the assumptions themselves. In Argyris's terms, a thermostat that turns on the heat is single-loop learning. A thermostat that asks whether the temperature setting is right — that's double-loop.

Legacy modernization desperately needs double-loop thinking. It's not enough to translate COBOL to Java line by line (single-loop). You need to continuously re-examine whether the business logic being translated is still correct, whether the target architecture still makes sense given what you've discovered, and whether the decisions made yesterday still hold given what you learned today.

The Figure-8 operationalizes this. Here's how it works.

Loop One: Understand. A coordinated group of AI agents continuously reads and analyzes the legacy estate — code, user interfaces, documentation, databases, tickets, logs, and subject-matter expert knowledge. This isn't a one-time discovery phase. It's a persistent, ongoing process that feeds structured understanding into a central artifact we call the Living Spec.

Loop Two: Act. A second group of agents reads from the Living Spec and executes modernization work — generating code, writing tests, producing pull requests, building out the target architecture. Every decision, outcome, and blocker is reported back to the Living Spec.

The Crossing Point: The Living Spec. This is the critical architectural element. The Living Spec sits at the intersection of the two loops, the figure-eight's crossing point. It is a single, persistent, bidirectional, versioned system of record that maps the as-is legacy state to the target end-state. Agents don't coordinate with each other directly. They coordinate through the Spec.

Here's what that looks like in practice.

An agent in the Understand loop analyzes a legacy module and determines it contains three distinct bounded contexts that should become separate services. That finding — with rationale and source citations — gets written to the Spec. Later, an agent in the Act loop picks up one of those services and starts implementing it. Midway through, it hits an unexpected dependency that changes the decomposition strategy. That discovery flows back through the Understand loop. The Spec updates. Both loops turn. The map stays current.
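To make that flow concrete, here is a minimal sketch of two loops coordinating through a shared, versioned record. It is an illustration under stated assumptions, not the actual Living Spec: the `LivingSpec`, `Entry`, `understand_agent`, and `act_agent` names, the entry kinds, and the "billing" module are all hypothetical, and real agents would do analysis and code generation where this sketch writes fixed strings.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(frozen=True)
class Entry:
    """One immutable record in the spec (hypothetical shape)."""
    version: int
    loop: str      # "understand" or "act"
    topic: str     # e.g. a legacy module name
    kind: str      # "finding", "outcome", or "blocker"
    summary: str


@dataclass
class LivingSpec:
    """Append-only, versioned record shared by both loops."""
    entries: List[Entry] = field(default_factory=list)

    def write(self, loop: str, topic: str, kind: str, summary: str) -> Entry:
        # Every write gets the next version number; nothing is overwritten.
        entry = Entry(len(self.entries) + 1, loop, topic, kind, summary)
        self.entries.append(entry)
        return entry

    def latest(self, topic: str, kind: str) -> Optional[Entry]:
        for entry in reversed(self.entries):
            if entry.topic == topic and entry.kind == kind:
                return entry
        return None


def understand_agent(spec: LivingSpec, topic: str) -> None:
    """Understand loop: publish a finding, or revise it after a newer blocker."""
    finding = spec.latest(topic, "finding")
    blocker = spec.latest(topic, "blocker")
    if finding is None:
        spec.write("understand", topic, "finding", "split into 3 services")
    elif blocker is not None and blocker.version > finding.version:
        spec.write("understand", topic, "finding",
                   f"revised decomposition after: {blocker.summary}")


def act_agent(spec: LivingSpec, topic: str, hit_dependency: bool) -> None:
    """Act loop: read the current finding, then report an outcome or a blocker."""
    finding = spec.latest(topic, "finding")
    if finding is None:
        return  # nothing to act on yet
    if hit_dependency:
        spec.write("act", topic, "blocker", "unexpected shared-table dependency")
    else:
        spec.write("act", topic, "outcome", f"implemented per v{finding.version}")


spec = LivingSpec()
understand_agent(spec, "billing")                 # v1: initial finding
act_agent(spec, "billing", hit_dependency=True)   # v2: blocker reported
understand_agent(spec, "billing")                 # v3: finding revised
act_agent(spec, "billing", hit_dependency=False)  # v4: outcome against v3

for e in spec.entries:
    print(e.version, e.loop, e.kind, e.summary)
```

Note that neither agent function takes the other as an argument. The only thing they share is the spec, which is the property the architecture depends on.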

Why the Living Spec Is Important for Enterprises

The reason enterprises struggle to adopt AI-driven modernization isn't skepticism about AI's capability — it's the absence of trust infrastructure. A CISO needs to know that every automated decision has an audit trail. A CTO needs to know that human engineers can intervene at any point without losing context. A compliance officer needs to know that the system of record for what was decided and why actually exists.

The Figure-8 architecture provides this by design, not as an afterthought.

Every decision is documented and queryable. When an agent decides to decompose a legacy module into three microservices, the rationale, the source evidence, and the confidence level are written to the Spec. Six months later, when someone asks "why did we split this module?" the answer is there — with citations back to the legacy code, the ticket history, and the SME interview that informed the decision. This isn't logging. It's institutional memory that actually works.
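As a rough illustration of what "documented and queryable" could mean, the sketch below stores each decision with its rationale, evidence, and confidence, then answers a "why did we split this module?" question with a simple filter. The `Decision` fields, the `why` helper, and every sample value (module names, citations, confidence numbers) are hypothetical placeholders, not real project data or a real schema.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Decision:
    subject: str                # what the decision is about
    action: str                 # what was decided
    rationale: str              # why it was decided
    evidence: Tuple[str, ...]   # citations back to sources (placeholders here)
    confidence: float           # 0.0 to 1.0, as reported by the deciding agent


def why(decisions: List[Decision], subject: str,
        min_confidence: float = 0.0) -> List[Decision]:
    """Return decisions about `subject`, most confident first."""
    matches = [d for d in decisions
               if d.subject == subject and d.confidence >= min_confidence]
    return sorted(matches, key=lambda d: d.confidence, reverse=True)


# Hypothetical sample records for illustration only.
decisions = [
    Decision(
        subject="ORDERS module",
        action="split into three services",
        rationale="three bounded contexts with no shared state",
        evidence=("legacy source (placeholder citation)",
                  "ticket history (placeholder citation)",
                  "SME interview notes (placeholder citation)"),
        confidence=0.8,
    ),
    Decision(
        subject="BILLING module",
        action="keep as one service",
        rationale="tightly coupled batch cycle",
        evidence=("legacy source (placeholder citation)",),
        confidence=0.6,
    ),
]

for d in why(decisions, "ORDERS module"):
    print(f"{d.action}: {d.rationale} (confidence {d.confidence})")
    for source in d.evidence:
        print("  evidence:", source)
```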

Accountability is continuous, not retroactive. Because agents coordinate through the Spec rather than through ephemeral peer-to-peer communication, there is a versioned, auditable trail from legacy analysis through to generated code. A human reviewer can trace any line of generated code back through the decision chain that produced it — what was understood, what was decided, and why. Nothing happens in the dark.
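Tracing generated code back through its decision chain can be pictured as following parent links between records. The sketch below assumes each record stores the ID of the record it was derived from; the `Record` type, the `derived_from` field, the `trace` function, and the sample IDs are illustrative assumptions rather than a description of any real schema.

```python
from typing import Dict, List, NamedTuple, Optional


class Record(NamedTuple):
    record_id: str
    stage: str                    # "finding", "decision", or "code"
    note: str
    derived_from: Optional[str]   # ID of the record this one was based on


def trace(records: Dict[str, Record], start_id: str) -> List[Record]:
    """Walk from a record back to its origin, newest first."""
    chain: List[Record] = []
    seen = set()
    current: Optional[str] = start_id
    while current is not None:
        if current in seen:
            raise ValueError(f"cycle detected at {current}")
        seen.add(current)
        record = records[current]
        chain.append(record)
        current = record.derived_from
    return chain


# Hypothetical records: a finding led to a decision, which led to generated code.
records = {
    "F-1": Record("F-1", "finding", "module contains three bounded contexts", None),
    "D-1": Record("D-1", "decision", "split module into three services", "F-1"),
    "C-1": Record("C-1", "code", "generated service skeleton", "D-1"),
}

for r in trace(records, "C-1"):
    print(f"{r.record_id} [{r.stage}] {r.note}")
```

The cycle check is there because a trail that loops back on itself would mean the record is corrupt, and a reviewer should see that as an error rather than an endless trace.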

The gap between as-is and target is always quantified and current. The Spec continuously maps legacy reality to the modern target. As agents discover new complexity or make implementation decisions, both sides of the map update. At any point, a stakeholder can ask: how much of this system have we analyzed, how much have we migrated, what changed since last week, and what risks have emerged? The answer is never "let me get back to you."
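The stakeholder questions above (how much analyzed, how much migrated, what changed, what risks) reduce to a few aggregations over per-module status. Here is a minimal sketch, assuming the spec can expose a status row per module; `ModuleStatus`, `summarize`, and the sample modules and version numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ModuleStatus:
    name: str
    analyzed: bool
    migrated: bool
    last_changed_version: int   # spec version of the most recent change
    open_risks: int


def summarize(modules: List[ModuleStatus], since_version: int) -> Dict[str, object]:
    """Answer the four stakeholder questions from per-module status rows."""
    total = len(modules)

    def pct(count: int) -> float:
        return round(100.0 * count / total, 1) if total else 0.0

    return {
        "analyzed_pct": pct(sum(1 for m in modules if m.analyzed)),
        "migrated_pct": pct(sum(1 for m in modules if m.migrated)),
        "changed_since": sorted(m.name for m in modules
                                if m.last_changed_version > since_version),
        "open_risks": sum(m.open_risks for m in modules),
    }


# Hypothetical estate of four modules.
modules = [
    ModuleStatus("A", analyzed=True, migrated=True, last_changed_version=12, open_risks=0),
    ModuleStatus("B", analyzed=True, migrated=False, last_changed_version=8, open_risks=2),
    ModuleStatus("C", analyzed=True, migrated=False, last_changed_version=15, open_risks=1),
    ModuleStatus("D", analyzed=False, migrated=False, last_changed_version=3, open_risks=0),
]

report = summarize(modules, since_version=10)
print(report)
```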

This is the difference between a research demo and a product that regulated industries can actually deploy.

What We're Building Differently

The prevailing approach in the modernization space, from specialized engineering tools to large outsourced engagements, is to ingest code and produce artifacts: static documentation, architectural models, translated source files. These artifacts are useful, but they are snapshots. They represent understanding at a moment in time. They go stale the instant the underlying system changes, a new dependency is discovered, or a business rule is reinterpreted.

Glover Labs takes a different approach, and the differences compound.

We don't just read code. We ingest code, UIs, documentation, support tickets, operational logs, and subject-matter expert knowledge. The Understand loop has a richer picture of the legacy estate because it draws from every source of institutional knowledge, not just the repository. Competitors that ingest code alone are working with a partial map — and partial maps produce partial modernizations.

The Living Spec itself is unlike anything else in the market. Static documentation tools produce read-only artifacts. The Living Spec is read-write. Both loops feed it. Both loops draw from it. It functions as coordination hub, audit trail, and progress tracker in a single queryable structure — a role that no static document, no matter how detailed, can fill.

And the way agents coordinate matters more than most people realize. Research on multi-agent systems — from Atlassian's HULA framework to recent work on iterative AI refinement loops — consistently shows that structured feedback mechanisms outperform unstructured agent-to-agent communication. We took that finding and made it the core design principle: agents never talk to each other. They talk to the Spec.

The Moment We're In

The legacy modernization market is at an inflection point. The tools are powerful enough. The models are capable enough. But the coordination architectures haven't caught up.

Most organizations are still deploying AI agents the way they deployed consultants fifteen years ago — one workstream at a time, with coordination happening in meetings and shared documents that no one reads. That approach didn't scale then, and it doesn't scale now.

The fix isn't better agents. It's a better way for agents to share context, record decisions, and maintain accountability across the full lifecycle of a modernization program.

That's what we built the Figure-8 to do.

We think dual loops coordinating through a persistent, bidirectional system of record will become the standard pattern for enterprise-scale AI modernization. Not because the architecture is elegant — though it is — but because the alternative is agents working in the dark, compounding each other's errors, with no one able to tell you what happened or why. Enterprises don't buy that. They shouldn't have to.

If your organization is sitting on decades of legacy systems and wondering how AI fits into the modernization picture, we'd love to talk. Not about what AI can do in a demo — about what it takes to actually ship a modernization program that your CTO, your CISO, and your board can trust.